When two gameplays are no longer the same game. DLSS 5 and the Generative Model

For years, we took something very simple for granted. If two people play the same scene, at the same point, with the same input, they see the same image.

That’s no longer true.

With DLSS 5, we’re not facing yet another evolutionary step in upscaling or frame generation. That chapter closed with previous versions. Here, the very nature of rendering changes. The final image is no longer the direct consequence of the rendered scene, but the result of an inference process. Translated: the GPU no longer just improves what is there. It decides what should be there.

And this breaks something fundamental.

It’s no longer reconstruction, it’s interpretation

In classical rendering, even with ray tracing or path tracing, the result remains a deterministic function. The methods change, the computational cost changes, but the logic is always the same: same inputs, same output.

DLSS 5 introduces a layer that doesn’t reconstruct, but completes. Fills. Interprets.

The wood surface is no longer just the one calculated by the shader. It’s the one the model deems plausible. Skin, reflections, indirect light are not just simulated. They are estimated.

As long as it’s about visual quality, it’s an advantage. When you look at consistency, it becomes a problem.

The critical point of DLSS 5: two gameplays can diverge

Imagine this situation: same build, same scene, same path.

Two players record the same moment.

The gameplay is identical. The hitboxes, collisions, game logic don’t change. But the image does. Not blatantly, but enough to create real differences.

Reflections may appear more pronounced, textures more detailed, and shadowed areas either more closed or more open.

They are micro-variations, but they are variations. And most importantly, they are not guaranteed to be identical between two executions.

You’re no longer looking at a copy of the world. You’re looking at an interpretation of it.

Same prompt, two results

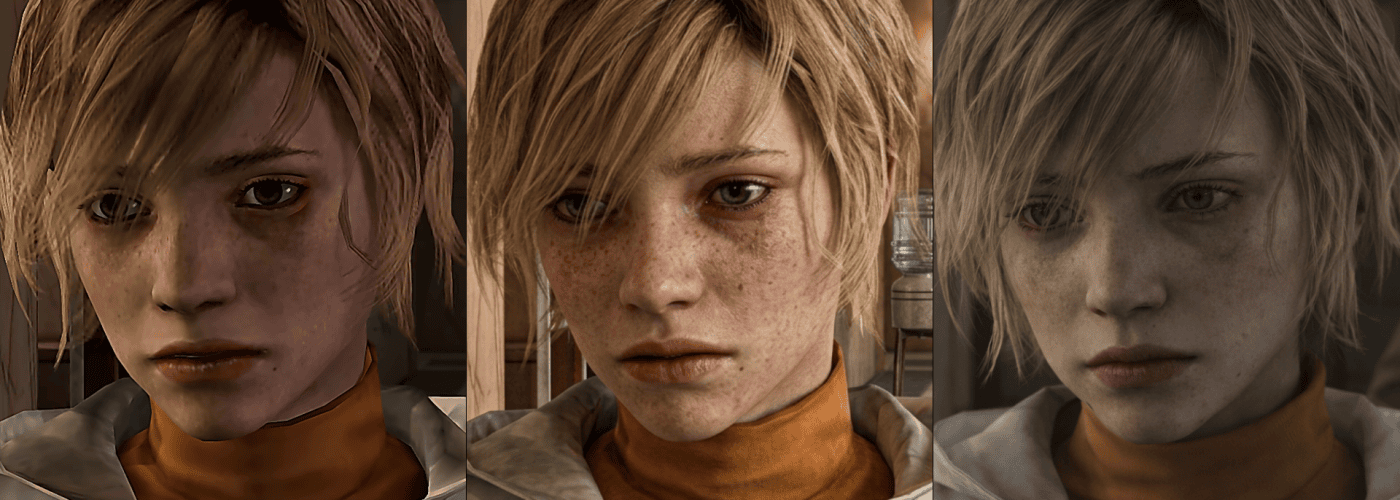

To make this point clear, a simple experiment is enough.

The setup is intentionally minimal, without any attempt to constrain the model or suppress its natural tendency to introduce variation.

Take the same frame from the game, apply the exact same prompt, and run the generation twice.

The result is, unsurprisingly, not identical.

Not because something went wrong, but because the model does not reconstruct: it interprets. Each inference produces a plausible variation, consistent with the scene, but not perfectly overlapping.

This is exactly where the break happens.

The same input no longer guarantees the same output.

And this is the same principle that, when brought into a pipeline like DLSS 5, opens the door to a very real possibility: two executions of the same game play moment may not produce the same image.

Streaming: reality fragments even further

Now add streaming.

The pipeline becomes: game → DLSS 5 → compression → platform → decompression

AI adds details. Compression destroys or alters them. The viewer receives a further reinterpreted version.

Two streamers playing the exact same point can show two different images. And the viewer sees a third version yet.

It’s not a bug. It’s a natural consequence of the system.

Why this really changes things

As long as rendering is deterministic, some implicit guarantees exist:

- A replay is verifiable

- A screenshot is reliable

- A visual bug is reproducible

- Art direction is under control

With a generative system, these guarantees weaken.

They don’t disappear, but they become probabilistic.

QA becomes more complex. Competitive gaming becomes more ambiguous. Artistic control shifts, at least in part, outside the engine.

Can we keep it under control?

The answer is yes, but not for free.

To maintain coherence, constraints need to be introduced. Not on classical rendering, but on the AI itself.

One can force deterministic behavior by introducing a seed tied to the scene and frame. This way, given the same conditions, inference always produces the same result.

One can lock the temporal history, preventing small errors from accumulating and generating visual drift over time.

Critical zones can be separated from free ones. Characters, weapons, gameplay elements remain under strict rendering. The environment can be left to generative freedom.

Semantic constraints can be passed to AI. A face stays a face. A weapon maintains its shape. The rest can vary.

And in replays or competitive environments, the state of the inference itself can be recorded and synchronized, not just inputs and frames.

These are all possible solutions. None are free.

The real direction of DLSS 5 generative rendering

DLSS 5 is not just graphics technology. It’s a philosophy change.

Rendering ceases to be a direct representation of the scene and becomes a mediation between data and perception. The goal is no longer to show what was calculated, but what should appear correct.

As long as it works, “it’s magic”. When comparing two versions of the same reality, you realize it’s no longer the same thing.

For years we discussed resolution, frame rate, ray tracing. Technical, measurable, objective parameters.

Now the question changes.

It’s no longer: “how faithful is the image?”

But: “how shared is it?”

Because the moment two people no longer see exactly the same scene, rendering is no longer just technology. It’s an interpretation.

And at that point, the discussion leaves the technical realm.

My personal consideration

For centuries, philosophers have been asking a simple and unsettling question: are two individuals truly perceiving the same world during observation, or are they just compatible versions of each other?

Until yesterday, it was an abstract doubt. Today, for the first time, we’re starting to have a system that makes this difference concrete, measurable, reproducible.

It’s no longer just a matter of perception. It’s part of the graphics pipeline.